The D4H’s newest postdoc, Davide Liga, introduces himself by discussing how AI relates to discussions of the past that can break down our preference for the present.

Author: Davide Liga

Davide Liga is a member of the D4H board and a postdoctoral researcher at the University of Luxembourg (FSTM).

Although I am a computer scientist and I don’t have a background in historical studies, I do often think about history in a broad sense. By day, I am an AI researcher, and it is due to (or thanks to) that position that I see the bias with which I tend to regard history. This bias is known as “chronocentrism.” It’s a special kind of bias that makes people believe that the time in which they live is more crucial than other times.

Unlike many other common biases, chronocentrism only makes sense from a diachronic perspective. This particular diachronic feature, combined with people’s tendency to see history as following specific directions—where certain past steps become crucial for future achievements—explains why chronocentrism so easily takes hold in our beliefs.

Here arises a question: can we really know whether our time is more important than others? I don’t think so, but that’s why I am calling it a belief.

I also believe that humanity has entered a crucial stage and AI is at the core of these changes.

I was lucky enough to start my PhD at the very beginning of the Large Models era, before ChatGPT existed. At that time, I used pre-trained models like word2vec (an early example of a pre-trained large model) and BERT, before moving to generative models like GPT. After my PhD, I continued working on large models and generative AI, which gradually became the protagonists of the recent AI wave.

At the Department of Computer Science (DCS), I have worked in two groups, namely the Individual and Collective Reasoning (ICR) group and the new CLAiM group (Computational Law and Machine Ethics). Both groups are very much interested in how AI systems interact with societal, legal, and ethical norms.

More specifically, my focus is on the creation, alignment, manipulation, and benchmarking of Large Models, using them in scenarios where their reasoning skills are crucial (e.g., argumentation, legal/normative reasoning, dialogues).

Now, I am the coordinator of the D4H-DTU, where my interest in Large Models is flowing in different directions, which I am currently exploring together with some members of the DTU:

- Large Models as a historical construct. The information Large Models channel tends to reflect existing perceptions and biases. Given that people are relying more and more on these models to get information, this also implies that Large Models have the power to build current perceptions and potentially shape people’s beliefs and ideas. In this regard, I am interested in aligning them to follow our human values and norms. I am also interested in the use of Large Models as a support for the analysis of narratives (public opinion, propaganda, sentiment manipulation). This support can involve, for example, the extraction of information from the past and present infosphere.

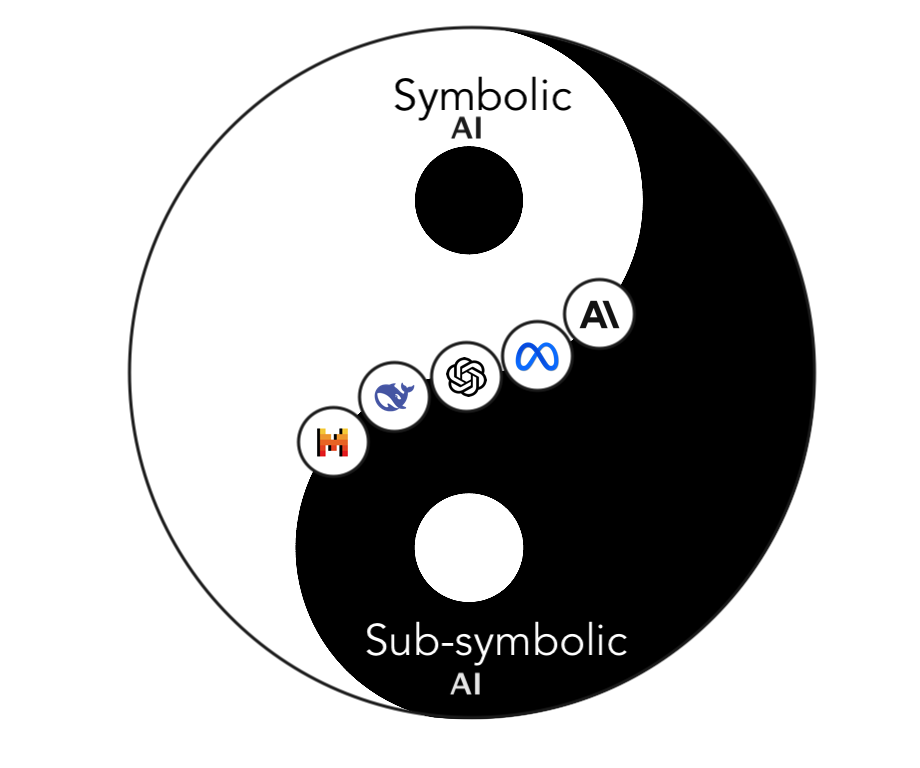

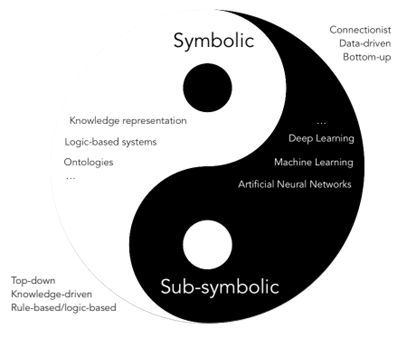

- Testing/benchmarking the emergence of Artificial General Intelligence (AGI). I am currently benchmarking some capabilities of LLMs, such as their reasoning capabilities and flaws. Because of this, I’ve recently become interested in the new field of Mechanistic Interpretability, which tries to make sense of how “symbolic” features (e.g., the capability to think and reason symbolically and logically) emerge from the sub-symbolic nature of Large Models (i.e., from the multidimensional numerical/vectorial representations encoded within the neural models’ weights and neuronal activations). Figure 1 shows how some people (myself included) like to see the dichotomy between the Symbolic and Sub-symbolic AI paradigms.

- LLMs as a support tool for historians. They can facilitate some processes that were once very difficult to tackle, such as the extraction, analysis, curation, or even augmentation of digitized data.

These are only some of the directions in which I am interested, and I am honored to be part of a DTU where many brilliant minds are focusing on similar or related research directions. The interdisciplinary environment of D4H is perfect for exploring the intricate connections between various levels of analysis, including the computational, the societal, and the historical.

That’s why I am sure this journey in the DTU will be not only pleasant but also full of intellectual and human growth.